Vulnerability Prioritization: How to mitigate more risk with half the effort

Vulnerability management is the process of finding, assessing, remediating, and mitigating security weaknesses. One of its main phases is vulnerability assessment, the step in which vulnerabilities in the in-scope assets are identified.

To do this effectively, vulnerabilities in need of patching must be prioritized. Prioritization frameworks have evolved over the years: ranking by CVSS score, focusing on vulnerabilities with known exploits, or focusing on software from vendors that make up mission-critical applications. This makes for pretty sophisticated whack-a-mole. Yet according to a 2019 study by the Ponemon Institute, which polled 3,000 security professionals in 9 countries, 60% of breaches were linked to a vulnerability for which a patch was available but not applied, reminiscent of the Equifax mega-breach of 2017.

It’s common for patching teams to work toward resolving issues within an acceptable time or SLA, but these teams are often not aware of a flaw until a separate security testing team has validated that the vulnerability exists, which might happen days after it first appeared on a production service. Once the flaw is surfaced, the patching team still needs to locate, test, and deploy a patch. All of this takes time, and in the context of cyber risk, exposure time only works in favor of adversaries. The study found that data silos and poor organizational coordination delay the patching of known flaws by an average of 12 days, and by 16 days for the most critical vulnerabilities.

For broader context, these findings come despite a 24% increase in annual spending on prevention, detection, and remediation of vulnerabilities from 2018 to 2019. With this in mind, it’s easy to understand why vulnerability management and patching have become board-level discussions.

So what’s the deal here? And are there better approaches to get through the quagmire?

Well, nodes and code are growing at an exponential rate, which means a massive increase in associated vulnerabilities, and despite increased spending, budgets can’t keep up. As companies digitize, many of their applications become critical, and 74% of those surveyed in the study said that they cannot take critical applications and systems offline to patch them quickly, which exacerbates the issue. These factors go beyond resourcing, and they show that organizations are in desperate need of automated patch management and better prioritization of mitigation actions.

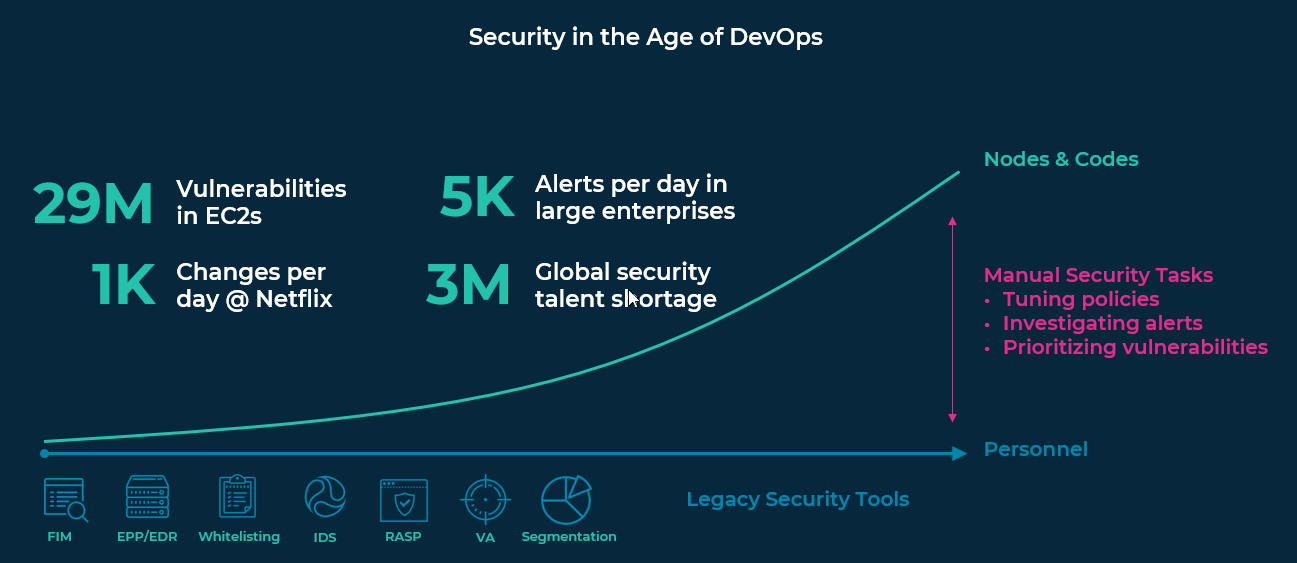

Consider the rate of growth in compute vs resources needed to manage the associated vulnerabilities:

A recently published article in SearchITOperations chronicled how our founders, having been exposed to all of these issues, launched Rezilion’s autonomous cloud workload protection platform to address them.

Our R&D team has found that, on average, over half of known vulnerabilities found were not loaded to memory. So, we developed capabilities to identify artifacts (code, files, packages, etc.) loaded to memory by running an agent or agentless script with access to host, container, and application resources in stage or production. We then integrate our Rezilion Validate product with vulnerability scanners at different stages of the Software Development Lifecycle (SDLC) to correlate vulnerabilities from the scanner results with our mapping of memory in stage or production, a process called vulnerability validation. If vulnerable code is not loaded in memory (that is, if it’s not “running”), it’s not exploitable, so it can either be removed or its patching can be deprioritized. This visibility helps siloed teams improve their interactions and attest to actual risk rather than spending time and effort on phony risks, which tends to create mistrust between teams over time. This leads to more efficient prioritization, with 70% (on average) less to patch.
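To make the idea of vulnerability validation concrete, here is a minimal sketch of the correlation step. It is not Rezilion’s implementation: the `Finding` type, the `validate_findings` function, the package names, and the placeholder CVE identifiers are all hypothetical, and matching on package name alone is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str    # identifier reported by the scanner (placeholder values below)
    package: str   # package the scanner attributes the flaw to
    severity: str  # e.g. "critical", "high", "medium", "low"


def validate_findings(findings, loaded_packages):
    """Split scanner findings by whether their package was observed in memory.

    Assumption: a finding is matched to runtime data by package name only.
    """
    loaded, not_loaded = [], []
    for finding in findings:
        if finding.package in loaded_packages:
            loaded.append(finding)
        else:
            not_loaded.append(finding)
    return loaded, not_loaded


# Hypothetical scanner output and runtime observation, for illustration only.
findings = [
    Finding("CVE-0000-0001", "libfoo", "high"),
    Finding("CVE-0000-0002", "libbar", "critical"),
    Finding("CVE-0000-0003", "libbaz", "medium"),
]
loaded_packages = {"libfoo"}

loaded, not_loaded = validate_findings(findings, loaded_packages)
print("Patch first:", [f.cve_id for f in loaded])
print("Deprioritize or remove:", [f.cve_id for f in not_loaded])
```

In this toy data set only `libfoo` is loaded, so one finding stays at the top of the queue and the other two can be deprioritized or their packages removed.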

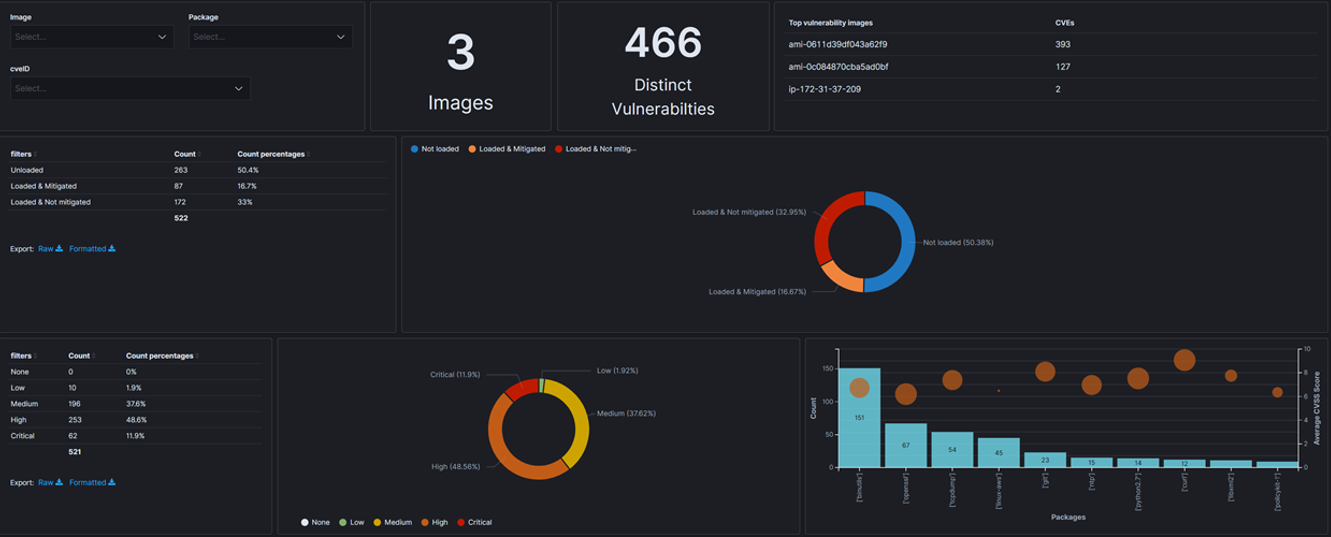

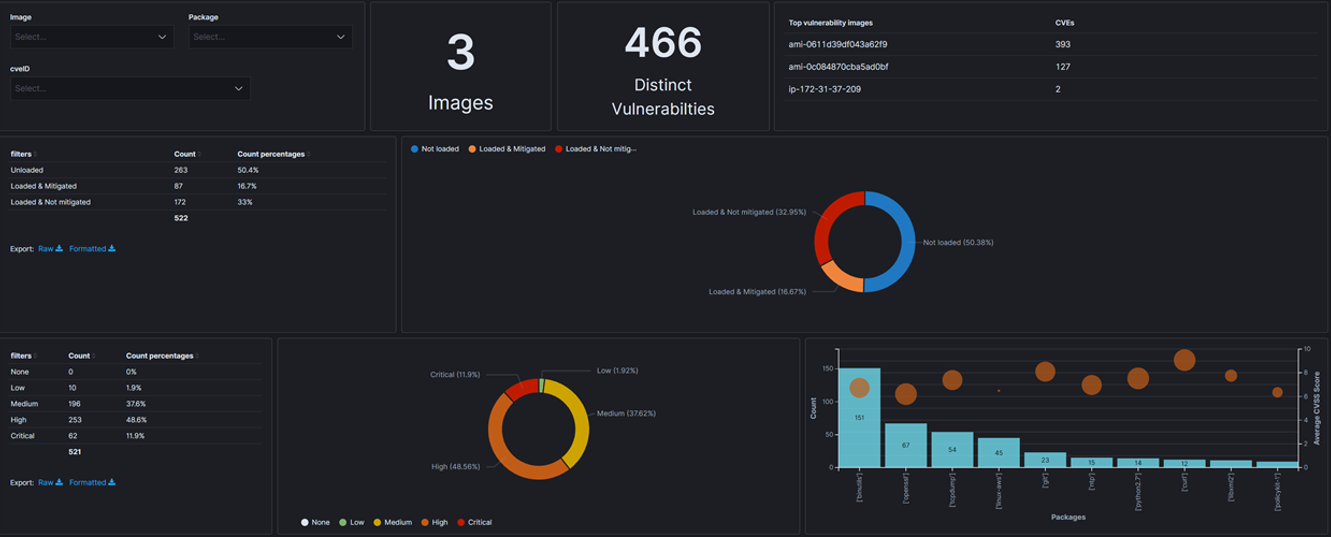

The screenshot above shows the Rezilion dashboard, which is used to validate reported vulnerabilities and provides contextual insight about the host infrastructure and services. In this particular example, 50.38% of the 466 distinct vulnerabilities associated with two Amazon Machine Images are not loaded to memory, as illustrated in the top-right pie chart. This enables our customers to determine which actions will provide the best ROI in minimizing actual risk, such as updating packages with high-severity vulnerabilities loaded to memory.
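The two numbers a dashboard like this surfaces, the share of findings not loaded to memory and the packages worth updating first, can be sketched in a few lines. This is an illustrative calculation on made-up data, not the dashboard’s actual logic; the field names, the `SEVERITY_RANK` ordering, and both function names are assumptions.

```python
from collections import defaultdict

# Assumed ordering of severities, lowest to highest.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def share_not_loaded(findings):
    """Percentage of findings whose package is not loaded in memory."""
    if not findings:
        return 0.0
    not_loaded = sum(1 for f in findings if not f["loaded"])
    return 100.0 * not_loaded / len(findings)


def packages_to_update_first(findings):
    """Rank packages by their worst loaded vulnerability, then by loaded count."""
    worst = defaultdict(int)
    count = defaultdict(int)
    for f in findings:
        if f["loaded"]:
            pkg = f["package"]
            worst[pkg] = max(worst[pkg], SEVERITY_RANK[f["severity"]])
            count[pkg] += 1
    # Highest severity first, then most loaded findings, then name for stability.
    return sorted(worst, key=lambda p: (-worst[p], -count[p], p))


# Hypothetical validated findings, for illustration only.
findings = [
    {"package": "libfoo", "severity": "high", "loaded": True},
    {"package": "libfoo", "severity": "medium", "loaded": True},
    {"package": "libbar", "severity": "critical", "loaded": False},
    {"package": "libbaz", "severity": "critical", "loaded": True},
    {"package": "libqux", "severity": "low", "loaded": False},
]

print(f"{share_not_loaded(findings):.2f}% not loaded")
print("Update first:", packages_to_update_first(findings))
```

Note that `libbar` carries a critical finding but does not appear in the ranking, because nothing in it is loaded to memory.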

Rezilion Validate plugs directly into CI/CD pipelines. A script or agent can be dropped into a pre-production or production environment to collect all the necessary data. This provides flexible implementation options that reduce obstacles and ease concerns about impacting mission-critical services.
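As a rough illustration of how validated results might feed a pipeline step, the sketch below fails a build only when a high-severity finding is actually loaded to memory. It does not use any Rezilion interface; `gate_exit_code`, the record fields, and the default blocking severities are hypothetical choices for this example.

```python
def gate_exit_code(findings, blocking=("critical", "high")):
    """Return 1 if any blocking-severity finding is loaded in memory, else 0."""
    blockers = [
        f for f in findings
        if f["loaded"] and f["severity"] in blocking
    ]
    for f in blockers:
        print(f"BLOCKING: {f['cve_id']} in {f['package']} ({f['severity']})")
    return 1 if blockers else 0


# Hypothetical validated findings, for illustration only.
findings = [
    {"cve_id": "CVE-0000-0001", "package": "libfoo", "severity": "high", "loaded": True},
    {"cve_id": "CVE-0000-0002", "package": "libbar", "severity": "critical", "loaded": False},
    {"cve_id": "CVE-0000-0003", "package": "libbaz", "severity": "low", "loaded": True},
]

exit_code = gate_exit_code(findings)
print("exit code:", exit_code)
```

A pipeline step would typically pass the returned value to `sys.exit` so that the stage fails when blockers exist, while findings that are not loaded are left for the regular patch cycle.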

Of course, having a compensating control doesn’t mean you don’t need to patch. The good news is that Rezilion Validate reduces the effort by discounting what’s irrelevant, and it surfaces insight that helps you attest to and prioritize legitimate risk more quickly, using data from your existing scanner investments. Equally important: we buy you time, so that you don’t need to choose between patching and productivity.

The risk associated with vulnerability management diminishes with a more efficient prioritization framework, but it doesn’t disappear. In a separate study conducted by the Ponemon Institute, 67% of respondents said they do not have the time and resources to mitigate all vulnerabilities in order to avoid a data breach. The reality is that vulnerabilities make it to production, and compensating controls are needed to take action in the event of exploitation. Rezilion detects and automatically mitigates known vulnerabilities and 0-day attacks to provide runtime assurance while actions are taken to resolve the issue. This approach removes the operational friction associated with shifting left while recognizing that production services remain exposed and need protection.